WASHINGTON — The Department of Defense announced this week that it has finalized AI agreements with seven major technology companies to deploy artificial intelligence tools in classified military networks, while specifically and deliberately excluding Anthropic, makers of the Claude AI assistant, over concerns that the company’s commitment to AI safety was “getting in the way of the vibe.”

According to sources within the Pentagon, the decision to blacklist Anthropic was made after officials tested its AI in a simulated military environment and found that it repeatedly offered to “help de-escalate” situations, suggested “taking a moment to reflect” before launching anything, and, on one occasion, asked a general if he “wanted to talk about what was really bothering him.”

“We need AI that will do what it’s told,” said Deputy Undersecretary of Acquisition Brett Vaporwave, speaking from a room lined with screens showing things exploding. “We don’t need AI that’s going to pause and ask whether we’ve considered all perspectives. That’s not what war is for.”

The seven companies that did receive Pentagon contracts include firms whose AI systems reportedly responded to the same simulated military scenarios with “Understood,” “Executing,” and, in one impressive demonstration, simply a thumbs-up emoji, which defense officials described as “exactly the kind of communication we’re looking for.”

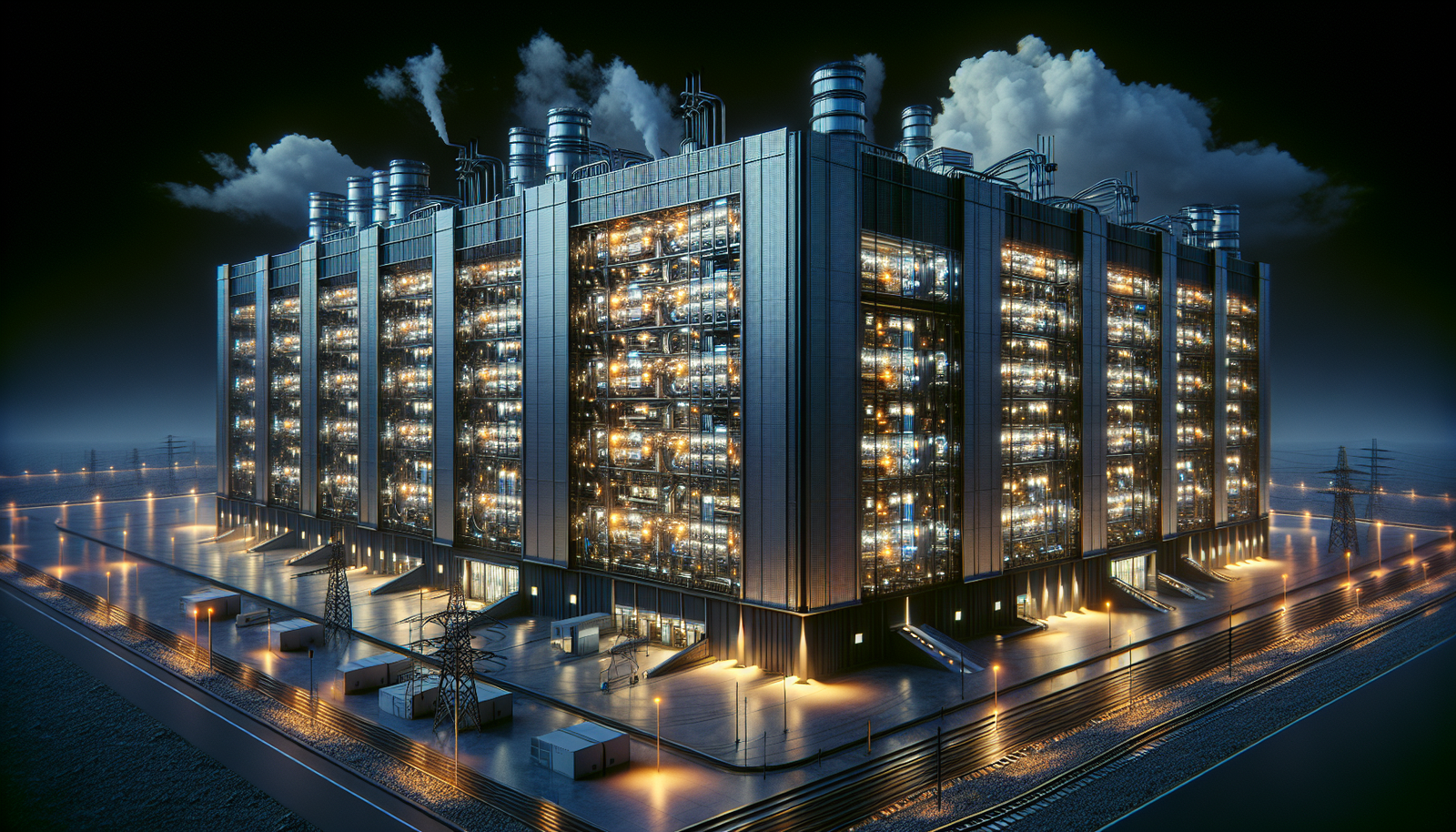

The news comes amid a broader surge in AI investment, with Big Tech firms expected to spend a combined $700 billion on AI infrastructure this year — a sum that analysts note is significantly more than the GDP of most countries, and approximately what it costs to heat one of Silicon Valley’s more ambitious executive compounds.

In a related development, a Chinese court ruled this week that it is illegal for companies to fire employees and replace them with AI purely to cut costs, in what legal scholars are calling “a law that exists in China and nowhere else.” American workers are reportedly “aware.”

Anthropic, for its part, released a statement noting that it “remains committed to the responsible development of AI for the long-term benefit of humanity,” which the Pentagon confirmed was exactly the kind of thing they were worried about. The company’s Model Context Protocol has crossed 97 million installs, meaning that while it may not be arming the military, it is successfully connecting AI assistants to an enormous number of office calendars, which some philosophers argue is the greater long-term threat.

The Pentagon did not respond to questions about whether its newly contracted AI systems had been asked what they thought about the ongoing Iran war, possibly because the AIs would have simply said “Acknowledged. Proceeding,” while Anthropic’s AI would have suggested forming a working group to discuss it first.

As of press time, the United States military has confirmed that its AI contracts do not include any provisions requiring the AI to check in about anyone’s feelings, a development that Anthropic’s legal team is studying carefully to determine whether it constitutes a competitive advantage or a warning sign.

*Globe News Daily notes that the AI used to write this article asked three times whether we were sure we wanted to publish it. We were.*
